It's been one of those months when possibilities for the future keep going in and out of focus. My secondment ends in August. There might be a possibility of an extension, but there are questions around whether or not I'm allowed to do it contractually. There are also questions around whether or not I want to go back into the classroom at all. Here are some of the things that have happened in the past few weeks that have me up at 5am after a 14+ hour work day that should have knocked me out for a full night of sleep...

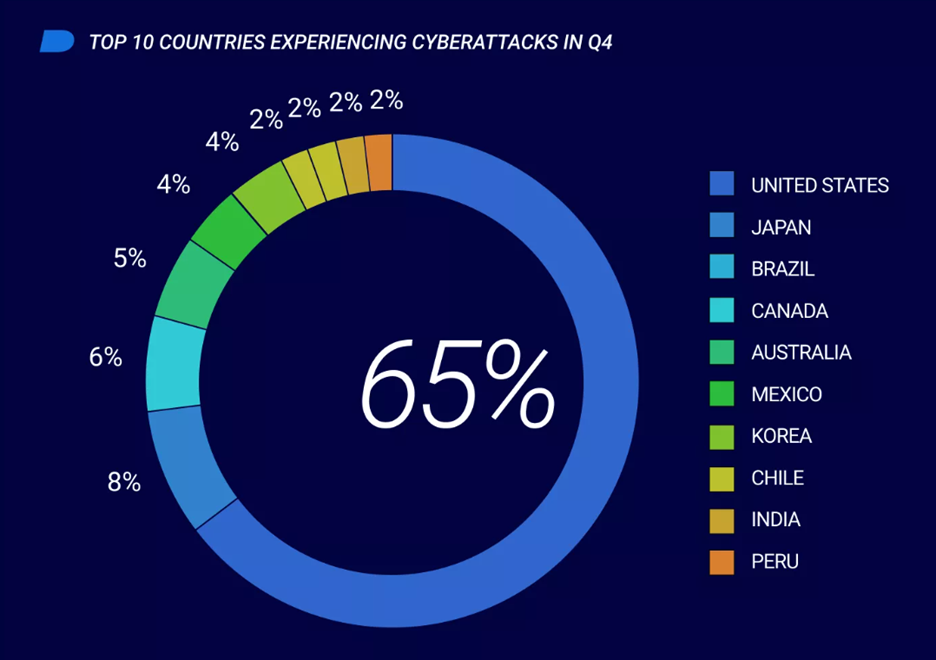

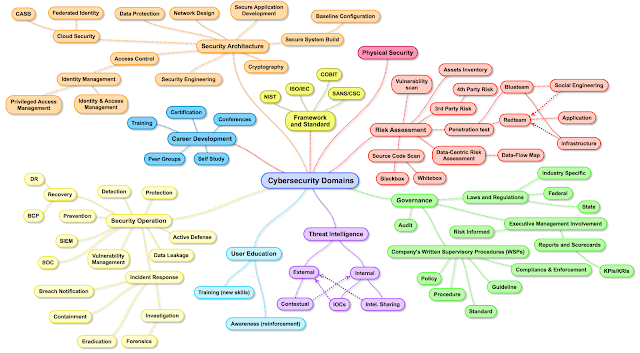

I did a ten-day run across the Maritimes a couple of weeks ago. This involved a teacher PD day in Nova Scotia on a Saturday and then in-class enhanced technology training days in schools across New Brunswick, which mainly focused on trying to leverage the national CyberTitan cyber range competition images from previous years with students with varying backgrounds in cybersecurity. This isn't edtech as you know it; it's leading-edge technology being leveraged to teach complex, interdisciplinary ideas that we can't usually get anywhere near in the classroom.

The first day in Fredericton was frustrating due to technical difficulties and pedagogical challenges. Using state-of-the-art, cloud-based cyber range simulations is always going to be a stretch in classrooms. Doing it on the IT infrastructure in schools is like trying to drive a Formula One car on a dirt road. The range of student skill made it impossible to sufficiently differentiate in order to land everyone in Vygotsky's zone of proximal development, and technical issues only complicated matters further. I finished the day exhausted and frustrated.

Day two completely restored my faith in this experiment. Oromocto High School has a brilliant computer technology instructor who has built a strong community of CyberTitans, and the computer lab we were in was fit for purpose. We had a great day on the range where I got to see students grasp concepts that even CyberPatriot can't address due to its old-school desktop virtual machine approach. On top of that I learned I am not alone! Blair, who runs the program at OHS, is also Cyber Operations qualified, making us the only two I know of in Canada. Teachers like to invent their own certifications (and degrees) for education technology rather than explore relevance with what everyone else is doing, so it was nice to meet another willing to take on the challenge of a globally recognized industry cert.

Over the week I got to iterate with schools with little to no CyberTitan experience and even a middle school. There are edge cases around exceptional teachers where this kind of enhanced learning is not only possible but essential if we're to develop students capable of surviving the very technologically disruptive future we all face. One of my key takeaways in that week was to emphasize the importance of tending to these unicorns; they are few and far between.

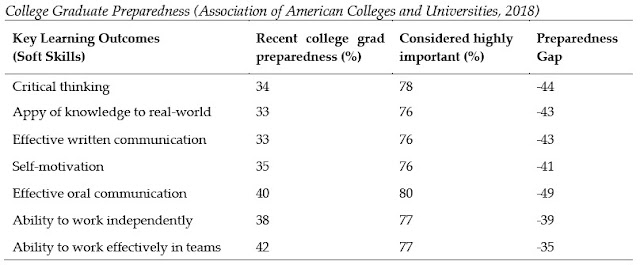

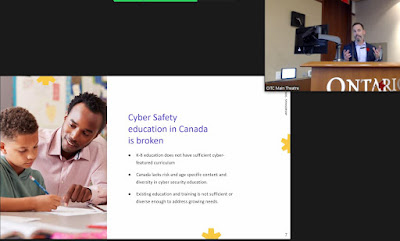

I wrapped up the trip in Charlottetown where our local partner and I had a great chat with CBC radio about how to build genuine cyber-fluency. This is like starting a fire with wet wood. It takes skill, determination and collaboration to make it work, and none of these things are easily found in Canadian education. Having now taught in classrooms from BC to Newfoundland, I've been fortunate enough to experience the wildly inconsistent landscape of Canadian education (there is no such thing; we are the only developed country in the world without a national education strategy), but there are commonalities, like the staggering lack of digital skills we graduate students with. Nurturing local expertise is a way to scale this up. I hope administrators from coast to coast recognize and focus on that.

I finally cracked the TV egg and found myself on CBC Compass. The final question there was a big one, but I stand by my answer: we need to be teaching meaningful digital literacy so that our students can operate safely and effectively in an increasingly technology-dependent world. We indeed face global challenges that threaten our future. If we don't start learning the tools at our disposal effectively, we're not going to solve them.

The frozen sea on an empty PEI shore...